OpenAI and the Research Brand That Had to Become a Deployment Platform

OpenAI's brand moved from research-lab promise to mass deployment pressure as ChatGPT, APIs, models, safety work, and developer tools made the company a public AI infrastructure brand.

Short Answer

OpenAI and the Research Brand That Had to Become a Deployment Platform is a trust case about OpenAI from 2015 to the present. A research organization became a consumer, developer, and enterprise platform at once, making safety, capability, product reliability, and public trust part of the same brand system. AI company brands cannot stay in research language after mass deployment. Once the models shape work, media, coding, search, and education, trust has to be operational, productized, and repeatedly explained.

Key Takeaways

- OpenAI is a trust case because its brand now sits between research ambition and deployed public infrastructure.

- The mission language creates authority, but product behavior creates daily trust or distrust.

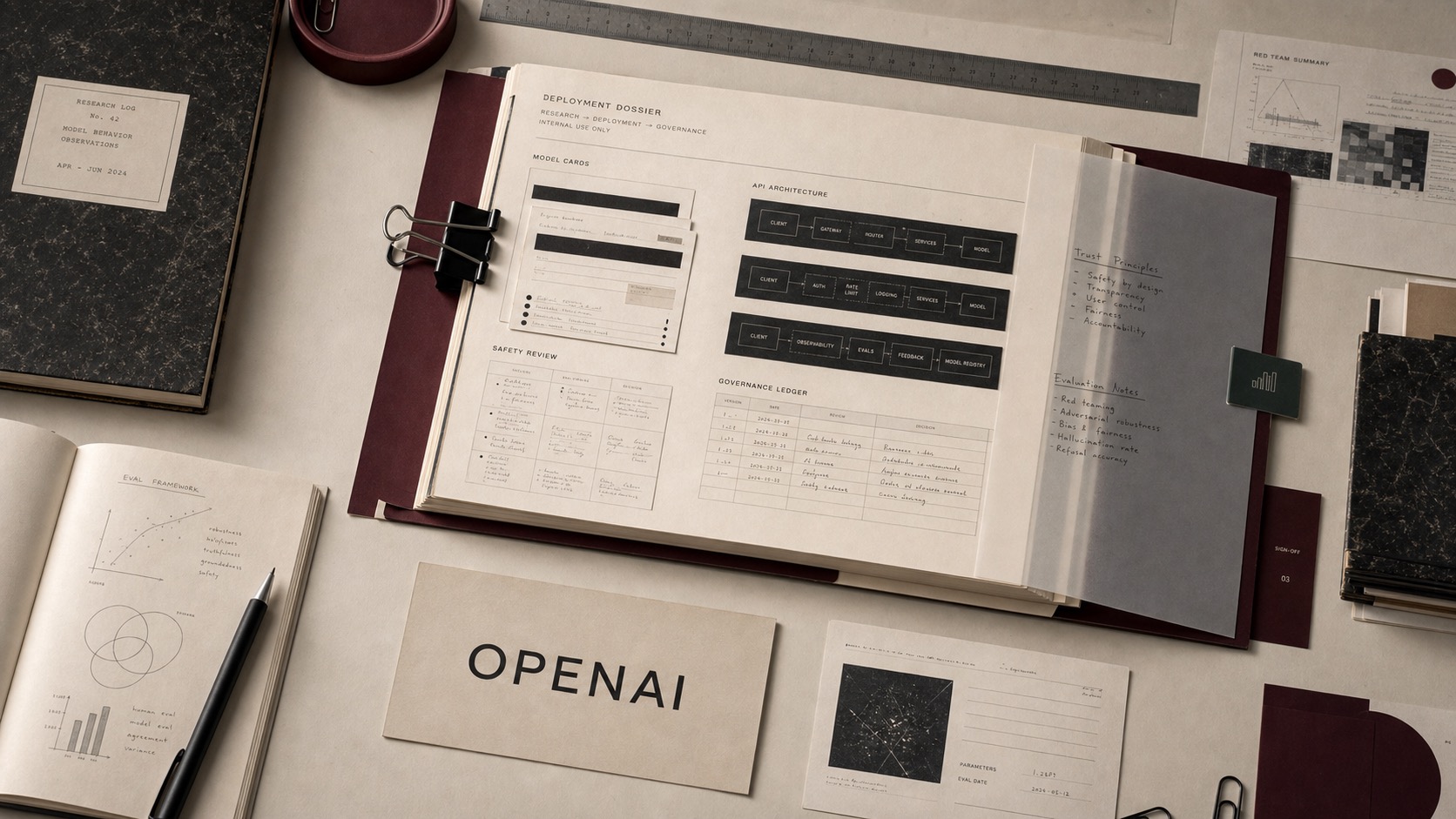

- APIs, ChatGPT, model releases, safety notes, and developer tools all act as brand surfaces.

- The operator lesson is that high-capability brands need governance people can inspect, not only ambition people can admire.

The Decision Context

OpenAI began with the authority language of research and a mission around broadly beneficial artificial intelligence. That gave the organization a public frame larger than any one product. The strategic problem changed when models stopped being primarily lab artifacts and became tools millions of people could use.

At that point, OpenAI's brand was no longer only about what the company was trying to build. It was about whether the company could deploy powerful systems responsibly across consumer, developer, enterprise, and public-information contexts.

From Lab Signal To Platform Signal

ChatGPT made OpenAI legible to the mass market. The API platform made it legible to developers and companies. Model releases made it legible to researchers and competitors. Safety and policy communication made it legible to institutions that needed to understand risk.

Those surfaces now reinforce or weaken each other. A model launch, product outage, safety update, developer tool, pricing change, or policy decision each teaches the market what OpenAI is. That is the shift from lab brand to platform brand.

The Trust Burden

High-capability AI brands carry a different trust burden from ordinary software brands. Users are not only asking whether the tool works. They are asking whether the system is accurate, controllable, secure, aligned with policy, and safe enough to use inside consequential work.

That is why OpenAI belongs in the archive as a trust case. The company's brand is built through capability, but it is defended through governance, developer documentation, safety practices, and product reliability.

The Archive Reading

OpenAI's brand strength comes from making frontier AI feel usable. Its brand risk comes from the same fact. When a company turns research into infrastructure, every failure travels faster because the tool is already embedded in work habits.

For operators, the lesson is to treat deployment as brand architecture. If the product changes how people write, code, search, learn, or decide, the brand must explain not only what the model can do, but how the company governs what happens after release.

Frequently Asked Questions

What type of brand decision was this?

OpenAI is filed as a trust case in the Artificial Intelligence category, with the primary decision period marked as 2015-present.

What is the decision lesson?

AI company brands cannot stay in research language after mass deployment. Once the models shape work, media, coding, search, and education, trust has to be operational, productized, and repeatedly explained.

Does the article contain a commercial CTA?

No. Brand Archive article pages do not carry in-article commercial calls to action.